LLM adoption continues at a pace dictated by the availability of talent, which is to say it is extremely constrained. Cost, efficiency and speed gains are easy to understand, but organisations adopting AI need to be mindful of the challenges that LLM use creates.

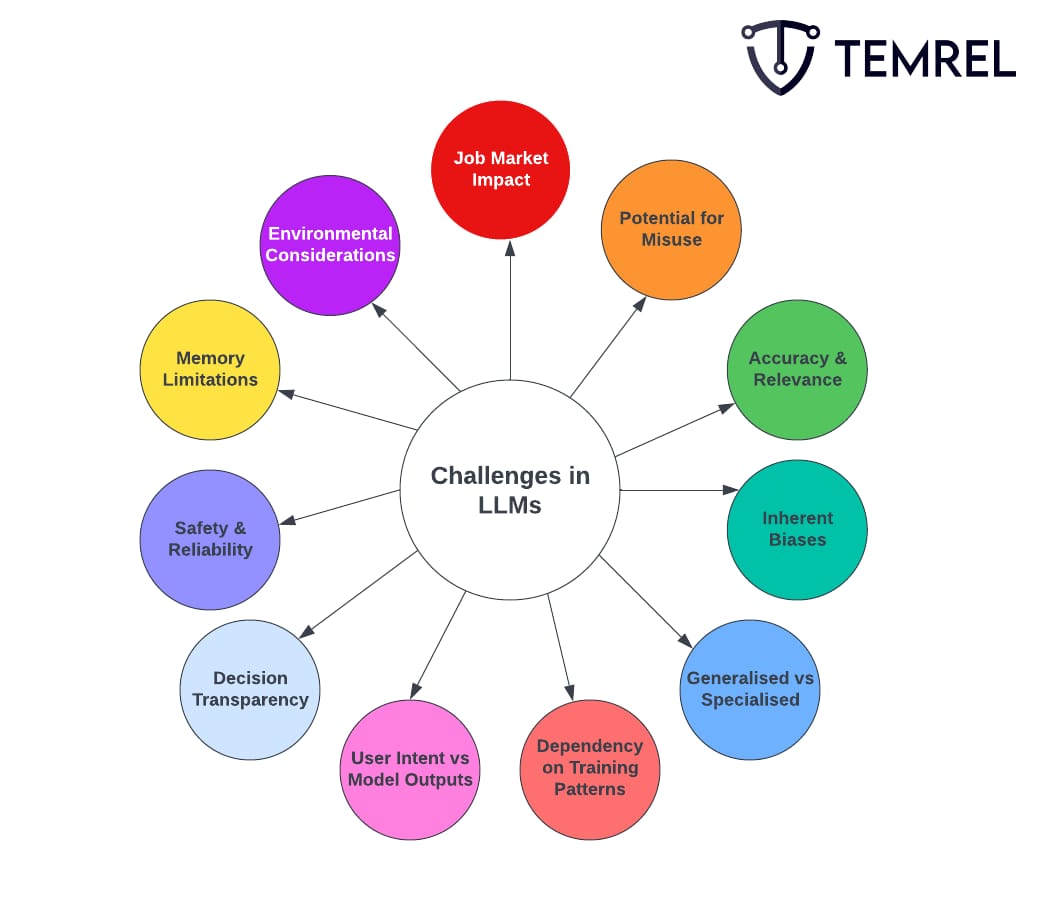

We’ve identified 11 such considerations:

Job Market Impact: The rise of LLMs has sparked discussions on their effect on employment. Industries, especially those focused on content creation and customer support, are undergoing transformation as tasks once done by humans are now being automated by these models. This disruption is almost certainly only the tip of the iceberg.

Potential for Misuse: The adaptability of LLMs in content generation poses risks. Malicious users might harness their capabilities to disseminate misleading narratives, generate spam, or even produce harmful content on a large scale. Fake news will become much more difficult to spot.

Accuracy and Relevance: LLMs sometimes produce information that is incorrect, fabricated, or unrelated to the input. This is largely because they generate plausible-sounding text rather than look up facts, so their responses are not always grounded in the verifiable facts present in their training data. This phenomenon is known as hallucination.

Inherent Biases: Given that LLMs are trained on enormous datasets sourced from the internet, they often inadvertently mirror biases present in these datasets. Striking a balance between rectifying these biases and maintaining the model's comprehensive utility is complex.

Generalisation vs. Specialisation: We’ve all seen generic, LLM-written social media posts. They’ve become fairly easy to spot, in fact. While LLMs are engineered to address a broad spectrum of topics, their generalist nature can fall short in offering precise, domain-specific insights compared to models tailor-made for specific fields. Prompt engineering and fine-tuning help to mitigate this.

Dependency on Training Patterns: LLMs base their outputs on observed patterns in their training data. This mechanism can occasionally lead to outdated, overly broad, or even erroneous outputs, reflecting the vast and varied nature of their training sources. This is particularly brutal in models with a fixed training cutoff date (e.g. ChatGPT’s training dataset stopping at September 2021), which know nothing about events after that point.

User Intent vs. Model Outputs: LLMs can sometimes produce answers that, although accurate in some contexts, don't address what the user actually meant to ask. This underscores the importance of clear and precise user prompts. While not quite hallucination, this can still mislead users who don't know enough to spot the mismatch.

Decision Transparency: For applications where it's vital to understand the reasoning behind decisions, the inherent complexity of LLMs poses challenges. Tracing back why a model arrived at a specific output is often intricate. Often the data scientists themselves don’t even know why.

Safety and Reliability: As LLMs find their way into critical applications, ensuring their consistent and safe operation is paramount. This necessitates the development of sophisticated testing and validation techniques, many of which are in their infancy.

Memory Limitations: Unlike humans, LLMs don't retain a long-term memory of past interactions. Users therefore have to supply comprehensive context within each interaction to get accurate results. In practice, the application that wraps your model deals with this. ChatGPT, for example, retains the context of your chats, an extremely useful feature.
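
To make the idea of a wrapping application concrete, here is a minimal sketch in Python. It is not how ChatGPT or any particular product is implemented. The `ChatSession` class, the `max_messages` limit and the `echo_model` stand-in are all hypothetical names invented for illustration; a real application would replace `echo_model` with a call to an actual model.

```python
from typing import Callable, Dict, List

Message = Dict[str, str]


class ChatSession:
    """Hypothetical wrapper: stores the conversation and resends it each turn."""

    def __init__(self, model: Callable[[List[Message]], str], max_messages: int = 20):
        self.model = model                # any function mapping messages -> reply
        self.max_messages = max_messages  # assumed cap so the context stays bounded
        self.history: List[Message] = []

    def ask(self, user_text: str) -> str:
        self.history.append({"role": "user", "content": user_text})
        # The model itself is stateless, so pass the recent history every time.
        window = self.history[-self.max_messages:]
        reply = self.model(window)
        self.history.append({"role": "assistant", "content": reply})
        return reply


def echo_model(messages: List[Message]) -> str:
    """Stand-in for a real LLM call: reports how much context it received."""
    return f"I can see {len(messages)} message(s) of context."


session = ChatSession(echo_model)
first = session.ask("My name is Ada.")
second = session.ask("What is my name?")
print(first)
print(second)
```

On the second call the stand-in model receives three messages (the first question, the first reply and the new question), which is what lets a real model answer follow-up questions. The cap on history is one simple design choice; real applications may summarise or select older messages instead.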

Environmental Considerations: The computational intensity of training advanced LLMs translates to substantial energy consumption. This raises environmental concerns, especially in the context of sustainable tech advancements.

Doom and gloom, right? We at Temrel still believe that, despite these challenges, AI presents a significant improvement over many of the current ways of doing things. There is no future in which organisations don’t adopt AI operations into the heart of their value delivery.